Why does trust matter more than technology?

Artificial intelligence can process massive health datasets faster than any human team. The promise is clear: earlier diagnosis, personalized treatment plans, and reduced administrative burden. Yet hospitals, insurers, and patients still hesitate to let algorithms make critical decisions. The hesitation is not about the sophistication of the models; it is about trust.

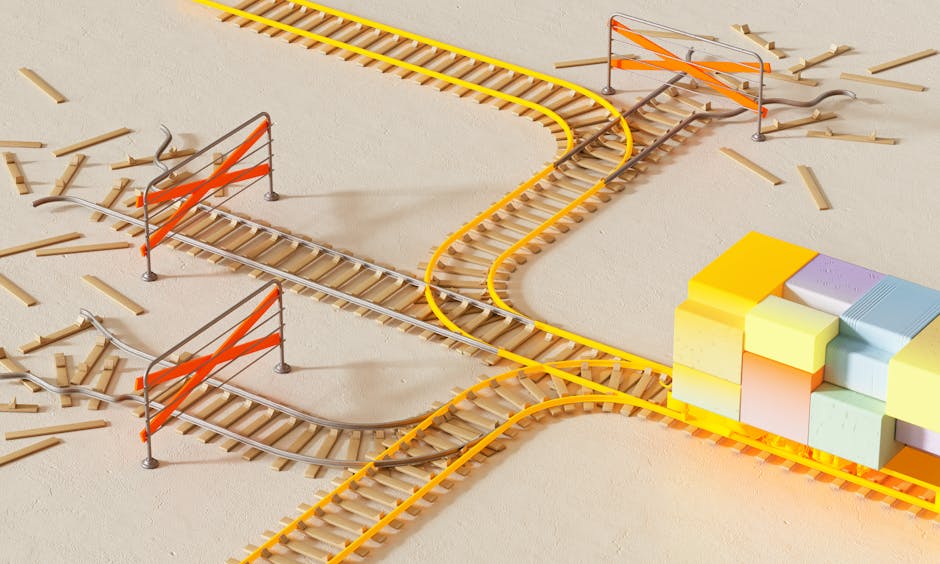

Trust in this context has three strands:

- Data integrity: Are the records accurate, complete, and up‑to‑date?

- Algorithmic transparency: Can stakeholders understand how a decision was reached?

- Governance and accountability: Who is responsible if an AI recommendation harms a patient?

When any of these strands fray, adoption stalls. This article explores the concrete obstacles that keep health‑AI projects from scaling and outlines practical steps to strengthen each strand of trust.

Data quality – the foundation that is often shaky

AI models are only as good as the data they ingest. In healthcare, data comes from electronic health records (EHRs), laboratory information systems, medical imaging archives, wearable devices, and even records of social determinants of health such as housing or income. Each source presents unique challenges.

Inconsistent coding and terminology

Different hospitals use different coding standards (ICD‑10, SNOMED‑CT, LOINC). Even within a single institution, clinicians may enter free‑text notes that vary in spelling, abbreviation, and granularity. An AI system trained on one coding set may misinterpret a new record, leading to inaccurate predictions.
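One way to picture the fix is a normalisation step that maps local labels onto a single canonical code before the model sees them. The sketch below is illustrative only: the mapping table `LOCAL_TO_ICD10` and both function names are hypothetical, and a real project would draw mappings from a maintained terminology service rather than a hard-coded dictionary.

```python
from typing import Optional

# Hypothetical local-label to ICD-10 mapping, for illustration only.
LOCAL_TO_ICD10 = {
    "dm2": "E11.9",
    "t2dm": "E11.9",
    "type 2 diabetes": "E11.9",
    "htn": "I10",
    "hypertension": "I10",
}


def normalise_diagnosis(raw: str) -> Optional[str]:
    """Return a canonical code for a free-text label, or None if unmapped."""
    # Lower-case, drop full stops, and collapse repeated whitespace.
    key = " ".join(raw.lower().replace(".", "").split())
    return LOCAL_TO_ICD10.get(key)


def split_mapped(labels):
    """Separate labels that map cleanly from those needing human review."""
    mapped, unmapped = {}, []
    for label in labels:
        code = normalise_diagnosis(label)
        if code is None:
            unmapped.append(label)
        else:
            mapped[label] = code
    return mapped, unmapped
```

Returning unmapped labels separately, instead of guessing, keeps a person in charge of the ambiguous cases, which is where silent misinterpretation would otherwise creep in.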

Missing or incomplete records

Patients often receive care from multiple providers. If a primary‑care clinic does not receive updates from a specialty hospital, the AI model sees an incomplete picture. Missing lab results, medication histories, or imaging reports create blind spots that can erode confidence.
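A simple completeness check can make these blind spots visible before a prediction is trusted. The following is a minimal sketch; the field names in `REQUIRED_FIELDS` are assumptions chosen to mirror the examples above, not a standard schema.

```python
# Hypothetical field names; adapt to the local record schema.
REQUIRED_FIELDS = ("lab_results", "medications", "imaging_reports")


def missing_fields(record: dict) -> list:
    """List required fields that are absent or empty in one patient record."""
    return [field for field in REQUIRED_FIELDS if not record.get(field)]


def completeness_rate(records: list) -> float:
    """Fraction of records with every required field populated."""
    if not records:
        return 0.0
    complete = sum(1 for record in records if not missing_fields(record))
    return complete / len(records)
```

A model could then decline to score, or attach a visible warning, whenever `missing_fields` returns a non-empty list.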

Data drift over time

Clinical practice evolves. New drugs enter the market, guidelines change, and disease prevalence shifts. A model that performed well on data from five years ago may be miscalibrated or systematically wrong on today’s patients. Without ongoing monitoring, performance decay becomes a hidden risk.
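One common way to monitor for drift is the Population Stability Index (PSI), which compares how a feature is distributed in the training data with how it is distributed in recent data. The sketch below is a plain-Python illustration; the function name, the choice of bin edges, and the `eps` floor for empty bins are all assumptions made for this example.

```python
import math


def psi(expected, actual, bin_edges, eps=1e-6):
    """Population Stability Index for one numeric feature.

    expected: values from the reference (training) period
    actual:   values from the recent period
    bin_edges: ascending cut points shared by both samples
    """
    def proportions(values):
        counts = [0] * (len(bin_edges) + 1)
        for v in values:
            # Bin index = number of edges the value has reached or passed.
            idx = sum(1 for edge in bin_edges if v >= edge)
            counts[idx] += 1
        total = len(values)
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / total, eps) for c in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))
```

A PSI of zero means the two distributions match across the chosen bins. Frequently quoted rules of thumb treat values above roughly 0.1 as worth a look and above roughly 0.25 as a significant shift, but those cut-offs are conventions and should be validated for each feature.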

Regulatory landscape – navigating a moving target

Regulators worldwide are still defining how AI fits into existing health‑law frameworks. The uncertainty itself is a barrier.

United States: FDA’s Software as a Medical Device (SaMD)

The FDA classifies AI tools that provide diagnostic or therapeutic recommendations as SaMD. To obtain marketing authorization, developers must submit evidence of safety and effectiveness. However, the agency’s approach to adaptive algorithms, those that continue learning after deployment, is still evolving. Companies that want to keep improving a model after authorization can describe the planned modifications in a “predetermined change control plan” that regulators review up front.

European Union: AI Act and MDR

The EU’s AI Act, which entered into force in August 2024 with obligations phasing in over the following years, sorts AI systems into risk‑based categories, with “high‑risk” applications subject to strict conformity assessments. In parallel, the Medical Device Regulation (MDR) requires clinical evaluation and post‑market surveillance. Aligning an AI product with both sets of requirements can be complex, especially for smaller innovators.

Data protection rules

HIPAA in the US, GDPR in Europe, and comparable statutes elsewhere set limits on how protected health information (PHI) and other personal health data can be used, shared, and stored. An AI project that extracts data from multiple sources must implement de‑identification or pseudonymisation techniques that satisfy these laws, adding technical and administrative overhead.
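As a rough illustration of pseudonymisation, the sketch below replaces a patient identifier with a keyed hash (HMAC-SHA256) and drops a few direct identifiers. The field names and the `drop` list are hypothetical. This step alone does not amount to HIPAA de-identification, and under GDPR pseudonymised data is still personal data, so treat it as one building block.

```python
import hashlib
import hmac


def pseudonymise(patient_id: str, secret_key: bytes) -> str:
    """Map an identifier to a stable pseudonym using a keyed hash."""
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_direct_identifiers(record, secret_key, id_field="patient_id",
                             drop=("name", "address", "phone")):
    """Return a copy with direct identifiers removed and the ID pseudonymised.

    The field names are assumptions for this sketch, not a standard schema.
    """
    cleaned = {k: v for k, v in record.items() if k not in drop}
    cleaned[id_field] = pseudonymise(str(record[id_field]), secret_key)
    return cleaned
```

Using a keyed hash instead of a plain hash means that someone without the key cannot rebuild the mapping by hashing a list of known identifiers. The key itself then becomes sensitive and needs to be stored separately from the data.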

Transparency and explainability – more than a buzzword

Clinicians need to know why an algorithm flagged a patient as high‑risk. Patients want reassurance that a recommendation is not a “black box.” Achieving transparency does not mean exposing raw code; it means providing interpretable evidence that supports a decision.

Post‑hoc explanation methods

Techniques such as SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model‑agnostic Explanations) assign importance scores to input features for a given prediction. While useful, these methods can be computationally expensive, and different methods (or repeated runs of a sampling‑based method such as LIME) can produce conflicting explanations for the same prediction, which can confuse rather than reassure.
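To show what such importance scores mean, and why they are costly, here is a from-scratch computation of exact Shapley values against a single reference patient. It is not the SHAP library; it illustrates the underlying attribution idea, and the function name and the single-baseline convention are assumptions of this sketch. The loop visits every subset of features, so the cost doubles with each added feature.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley attributions for one prediction.

    predict:  function taking a list of feature values, returning a number
    instance: feature values of the patient being explained
    baseline: reference values used for features that are 'switched off'
    """
    n = len(instance)

    def value(subset):
        # Features in the subset take the patient's value; others the baseline.
        x = [instance[i] if i in subset else baseline[i] for i in range(n)]
        return predict(x)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for combo in combinations(others, size):
                s = set(combo)
                # Marginal contribution of feature i when added to subset s.
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi
```

The attributions always sum to the difference between the prediction for the patient and the prediction for the baseline, which gives a quick sanity check for any explanation shown to a clinician.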

Model design for inherent interpretability

Choosing simpler models—logistic regression, decision trees, or rule‑based systems—can make the reasoning process clearer. In many clinical scenarios, a modest loss in predictive performance is acceptable if the model’s logic can be reviewed and disputed.

Human‑in‑the‑loop workflows

Embedding AI as a decision‑support tool, rather than an autonomous actor, preserves clinician authority. The system presents a risk score alongside the most influential variables, allowing the doctor to confirm, modify, or reject the suggestion. This approach builds trust by keeping the final judgment with a human professional.
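A minimal sketch of that pattern follows: an interpretable logistic model produces a risk score with its most influential variables, and a separate function records what the clinician decided. The coefficients, feature names, and function names are all hypothetical and are not drawn from any validated clinical model.

```python
import math
from dataclasses import dataclass

# Hypothetical coefficients for illustration only.
INTERCEPT = -4.0
COEFFICIENTS = {"age_over_65": 0.8, "lactate_high": 1.5, "heart_rate_high": 0.9}


@dataclass
class Suggestion:
    risk: float
    top_factors: list  # (feature name, contribution to the log-odds)


def suggest(features: dict, top_k: int = 2) -> Suggestion:
    """Score a patient and report the variables that moved the score most."""
    contributions = {
        name: coef * features.get(name, 0) for name, coef in COEFFICIENTS.items()
    }
    logit = INTERCEPT + sum(contributions.values())
    risk = 1.0 / (1.0 + math.exp(-logit))
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return Suggestion(risk=risk, top_factors=ranked[:top_k])


def final_decision(suggestion: Suggestion, action: str, clinician_risk=None):
    """The clinician confirms, modifies, or rejects; the model never decides."""
    if action == "confirm":
        return suggestion.risk
    if action == "modify":
        if clinician_risk is None:
            raise ValueError("a modified decision needs the clinician's own estimate")
        return clinician_risk
    if action == "reject":
        return None
    raise ValueError(f"unknown action: {action}")
```

Keeping `final_decision` separate from `suggest` makes the division of authority explicit in the code itself: the model proposes, and only a human action produces the value that is acted on.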

Accountability – who owns a wrong prediction?

When an AI recommendation leads to an adverse outcome, legal and ethical responsibility must be clear. The lack of established precedent creates hesitation among providers and insurers.

Shared responsibility frameworks

Some institutions adopt a “triad” model: the data provider guarantees data quality, the AI developer guarantees model performance, and the clinical user guarantees appropriate application. Formal agreements outline liability limits, insurance requirements, and escalation procedures.

Documentation and audit trails

Every AI interaction should be logged: input data, model version, prediction, and user action. These logs become essential evidence in case of dispute and also support internal quality‑improvement cycles.
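The sketch below shows one possible shape for such a log, with the four fields named above. Chaining each entry to the hash of the previous one is an addition of this example, included because it makes later edits detectable; the function names and entry layout are assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64


def _digest(body: dict) -> str:
    """Hash a log entry body deterministically."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, *, input_data, model_version, prediction, user_action,
                 timestamp=None):
    """Append one AI interaction to the log; input_data must be JSON-serialisable."""
    body = {
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "input_data": input_data,
        "model_version": model_version,
        "prediction": prediction,
        "user_action": user_action,
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "entry_hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log) -> bool:
    """Check that no entry was altered or removed from the middle of the chain."""
    prev = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if body["prev_hash"] != prev or _digest(body) != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True
```

In a dispute, `verify` gives a quick answer to whether the record was changed after the fact, before anyone argues about what the record says.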

Professional standards and guidelines

Medical societies are beginning to publish guidance on AI use. For example, the American Medical Association’s “Principles for AI in Health Care” recommends that clinicians retain ultimate responsibility for patient care and that AI tools be validated in the intended practice setting before deployment.

Integration into existing workflows – the hidden cost of change

Even a perfectly accurate, compliant AI model will fail if it disrupts daily routines. Integration challenges often appear after the technical prototype is complete.

Interoperability with EHR systems

Most hospitals use vendor‑specific EHR platforms (for example Epic, Oracle Health, formerly Cerner, or Allscripts). AI services need to exchange data via standards such as FHIR (Fast Healthcare Interoperability Resources). Building and maintaining these interfaces requires dedicated resources and close collaboration with EHR IT teams.
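As a rough idea of what an AI service would send, the sketch below builds a FHIR `RiskAssessment` resource as a plain dictionary. The field names follow our reading of the FHIR R4 resource definition, including the convention that `probabilityDecimal` is expressed as a percentage; both should be checked against the FHIR version the EHR implements. The function name and the model label are hypothetical, and how the resource is posted (endpoint, authentication) depends on the vendor and is left out.

```python
import json
from datetime import datetime, timezone


def build_risk_assessment(patient_id, outcome_text, probability, model_version,
                          when=None):
    """Wrap a model output (probability in [0, 1]) as a RiskAssessment dict."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    when = when or datetime.now(timezone.utc)
    return {
        "resourceType": "RiskAssessment",
        "status": "final",
        "subject": {"reference": f"Patient/{patient_id}"},
        "occurrenceDateTime": when.isoformat(),
        # Hypothetical label so the model version travels with the prediction.
        "method": {"text": f"hypothetical-model {model_version}"},
        "prediction": [
            {
                "outcome": {"text": outcome_text},
                # Assumption: the decimal is written as a percentage.
                "probabilityDecimal": round(probability * 100, 1),
            }
        ],
    }


def to_json(resource: dict) -> str:
    """Serialise the resource for an HTTP request body."""
    return json.dumps(resource, indent=2)
```

Carrying the model version inside the resource ties each prediction in the EHR back to the exact model that produced it.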

Alert fatigue

Overly aggressive AI alerts can overwhelm clinicians, leading them to ignore or disable the system. Tuning thresholds, providing actionable recommendations, and allowing users to customize notification preferences are essential to prevent fatigue.
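Threshold tuning can be made concrete with historical scores and known outcomes. The sketch below picks the lowest threshold whose alerts meet a minimum precision, so that clinicians see as many true cases as possible without too many false alarms. The function names and the candidate grid are assumptions of this example.

```python
def alert_stats(scores, labels, threshold):
    """Return (number of alerts, precision) at a given threshold.

    scores: model risk scores; labels: 1 if the event occurred, else 0.
    Precision is None when no alert fires.
    """
    fired = [label for score, label in zip(scores, labels) if score >= threshold]
    if not fired:
        return 0, None
    return len(fired), sum(fired) / len(fired)


def pick_threshold(scores, labels, candidates, min_precision):
    """Lowest candidate threshold whose alerts reach the required precision."""
    for threshold in sorted(candidates):
        _, precision = alert_stats(scores, labels, threshold)
        if precision is not None and precision >= min_precision:
            return threshold
    return None  # no candidate is good enough; the model may need rework
```

Stating the trade-off as "at least this share of alerts must be real" gives clinicians a number they can negotiate, which is easier to reason about than a raw score cut-off.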

Training and change management

Clinicians must understand both the capabilities and limits of an AI tool. Structured training programs, simulations, and peer‑to‑peer mentorship can smooth adoption. Ongoing support—such as a “clinical AI liaison” role—helps address issues that arise in real time.

Economic considerations – beyond the headline cost savings

Financial incentives shape adoption decisions. Stakeholders evaluate return on investment (ROI) not only in terms of direct cost reduction but also in risk mitigation, compliance, and competitive positioning.

Upfront investment

Data cleaning, model development, regulatory filing, and integration each carry substantial one‑time expenses. Small practices may lack the capital to fund these activities without external partners or grant funding.

Reimbursement uncertainty

Current insurance billing codes do not always recognize AI‑enabled services. Without clear reimbursement pathways, providers may view AI as a cost center rather than a revenue generator.

Value‑based care alignment

In payment models that reward outcomes (e.g., bundled payments, accountable care organizations), AI that improves quality metrics can directly enhance profitability. Demonstrating such alignment often requires longitudinal studies, which take time and resources.

Building trust step by step – a practical roadmap

Addressing the barriers does not require solving everything at once. A phased approach lets organizations make measurable progress while managing risk.

- Assess data readiness: Conduct a data audit to identify gaps, standardise coding, and implement data‑quality dashboards.

- Choose a pilot use case: Select a clinical problem with high impact, clear outcome measures, and existing data pipelines (e.g., predicting sepsis in the ICU).

- Develop a transparent model: Start with an interpretable algorithm, document feature selection, and generate explanation outputs for each prediction.

- Secure regulatory alignment: Map the chosen use case to relevant FDA, EU, or local regulations. Prepare a step‑by‑step compliance checklist.

- Define accountability: Draft a shared‑responsibility agreement among data owners, AI developers, and clinical users. Include audit‑log requirements.

- Integrate with workflow: Use FHIR APIs to surface predictions within the EHR UI where clinicians already work. Configure alerts to match existing triage thresholds.

- Train staff and collect feedback: Run workshops, provide quick‑reference guides, and establish a feedback loop for continuous improvement.

- Measure impact and iterate: Track pre‑defined metrics (e.g., reduction in length of stay, false‑positive rate) and refine the model or workflow as needed.
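The last step depends on metrics that are defined before the pilot starts. As a small illustration, the two example metrics above could be computed as follows; the function names are hypothetical, and a real evaluation would add confidence intervals and a comparison group.

```python
def false_positive_rate(alerts, outcomes):
    """Share of patients without the event who were nevertheless flagged.

    alerts:   True where the tool raised an alert
    outcomes: True where the event actually occurred
    """
    false_pos = sum(1 for a, o in zip(alerts, outcomes) if a and not o)
    true_neg = sum(1 for a, o in zip(alerts, outcomes) if not a and not o)
    negatives = false_pos + true_neg
    return false_pos / negatives if negatives else None


def mean_change(before, after):
    """Difference in means (after minus before), e.g. length of stay in days."""
    if not before or not after:
        raise ValueError("both periods need at least one observation")
    return sum(after) / len(after) - sum(before) / len(before)
```

A negative `mean_change` for length of stay would indicate shorter stays after rollout, though on its own it cannot show that the tool caused the change.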

Real‑world examples that illustrate the barriers

Several health systems have publicly shared their AI journeys, highlighting both successes and setbacks.

- Boston Children’s Hospital: Implemented an AI tool to predict pediatric asthma exacerbations. Initial rollout failed because the model relied on environmental data not captured in the EHR. After adding linked air‑quality feeds and redesigning the alert UI, adoption increased and readmission rates fell.

- German Cancer Research Center: Developed a deep‑learning system for histopathology classification. The model achieved high accuracy in the lab but could not be rolled out to the clinic as planned because of data‑residency constraints arising from GDPR compliance. The team built an on‑premise inference engine, preserving data sovereignty and satisfying regulators.

- Rural health network in Texas: Attempted to use a commercial AI platform for diabetic foot ulcer detection via smartphone images. Low internet bandwidth and lack of staff training caused the pilot to be paused. The network later partnered with a local university to create an offline version of the algorithm and conducted in‑person training sessions.

The future is incremental, not revolutionary

Technology will continue to improve—larger models, better federated‑learning techniques, and more robust privacy‑preserving methods. However, the pace of adoption will be set by how quickly the trust barriers are dismantled. Each stakeholder—data custodians, regulators, clinicians, patients, and payers—must see concrete evidence that AI serves their interests without compromising safety or privacy.

When data quality, transparency, accountability, workflow fit, and economic incentives align, AI moves from a novelty to a reliable tool. The journey is gradual, but the milestones are clear. Recognising the real obstacles and addressing them methodically will determine how far health AI can go.